Strange World

Disney Animation’s fall 2022 release is Strange World, the studio’s 61st animated feature and the third original story in as many years. Strange World takes us to the land of Avalonia, a realm surrounded by impenetrable mountains and home to a society that blends elements of early 20th-century pulp fiction, steampunk, and environmental solarpunk. The core story of Strange World revolves around father-son relationships and is exactly the type of story that Disney Animation excels at: something personal and relatable but set in a fantastic world we’ve never seen before. That “never seen before” aspect made my two years of working on rendering technology for Strange World an interesting time indeed!

In my writeups about our films, two themes always recur: 1. with each film, we build upon advancements made and lessons learned from the previous film, and 2. one of the greatest advantages of having in-house tools is the ability to customize and build exactly what each film’s story and art direction require. Strange World’s production exemplifies both of these themes. So much of what we had to do on Strange World builds upon things we learned from and developed for previous films that I’m not entirely sure we would have been able to make Strange World a few years ago, and we have only been able to apply those lessons as effectively as we have because we can extend and improve our own tools.

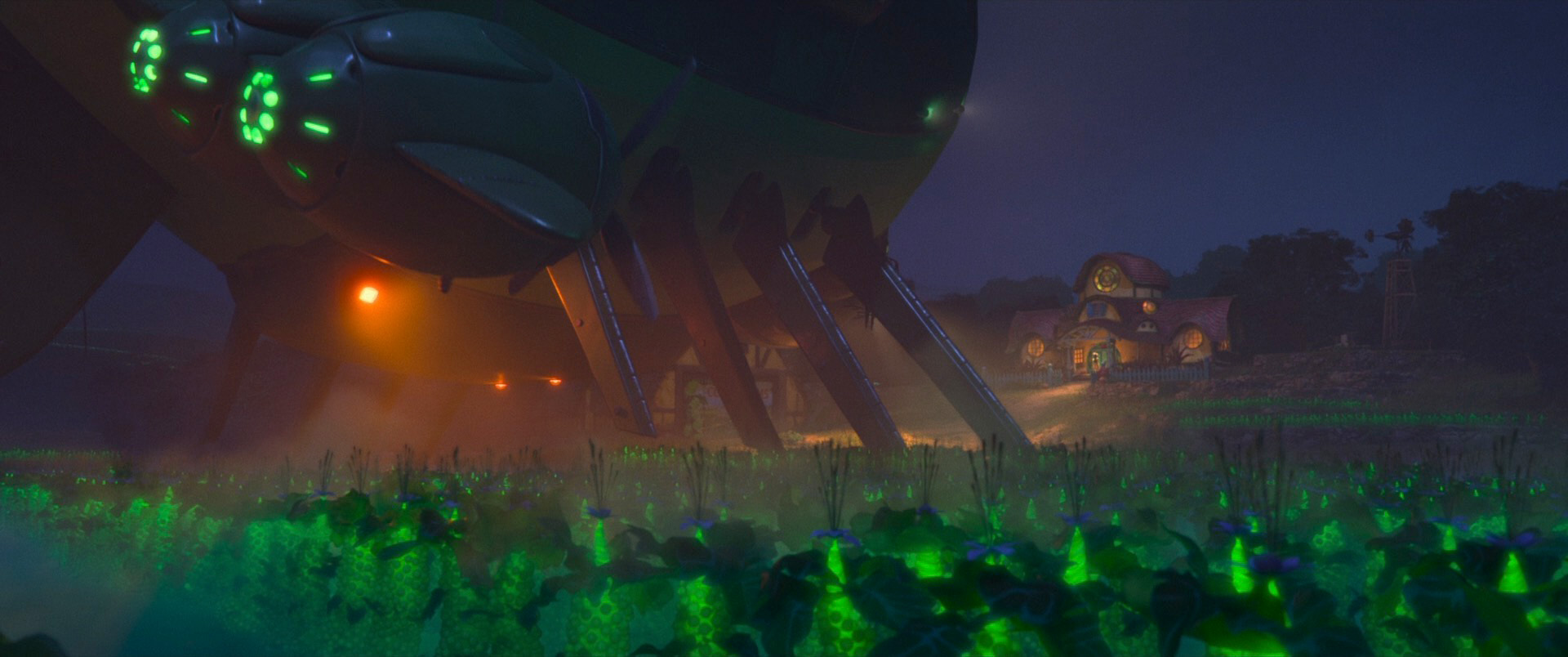

As an example: a large part of Strange World takes place on the airship Venture, which from a production pipeline perspective has to function both as a set/environment in which characters move and interact, and as a sort of character of its own as it moves around in the larger surrounding environment. In CG production pipelines, various pipeline optimizations are often built around the reasonable assumption that sets are relatively static; sets typically don’t need complex animation rigs and can be used as a stationary frame of reference for all kinds of different things. The Venture, of course, breaks all of these expectations. Disney Animation had to deal with this type of scenario before on Moana, where much of the movie is set on a canoe out at sea, so handling the “sets as characters” challenge wasn’t new. Instead of having to solve this problem from scratch, our artists and TDs were able to build on top of what they had learned to make the Venture a far more complex “set as a character” than anything we had done previously. In fact, the Venture isn’t the only case of this type of challenge in Strange World! Huge parts of Strange World follow this “set as a character” pattern; entire chunks of terrain get up and walk around in this movie! All of these complex sets were made possible by advancements [Vo et al. 2023] in our USD-based pipeline, which in turn built upon all of the lessons learned [Miller et al. 2022] from our previous pipeline.

Things got even more complex once crowds were brought into the mix. Strange World has some of the most massive crowd simulations ever made by Disney Animation [Devlin et al. 2023], and these huge crowds had to interact with the Venture and complex terrain. One of the main tools our crowds team used to guide giant swarms of creatures traveling through Strange World’s massive environments and around the Venture originated as a tool made for a single character on Frozen 2, and had to be turbocharged to go from handling the requirements for one character to handling thousands upon thousands of creatures [Lin et al. 2023]. Challenges involving simulating collisions in huge crowds like this, along with similar challenges in hair simulation, helped inspire further research work [Zhang et al. 2023] for future films as well.

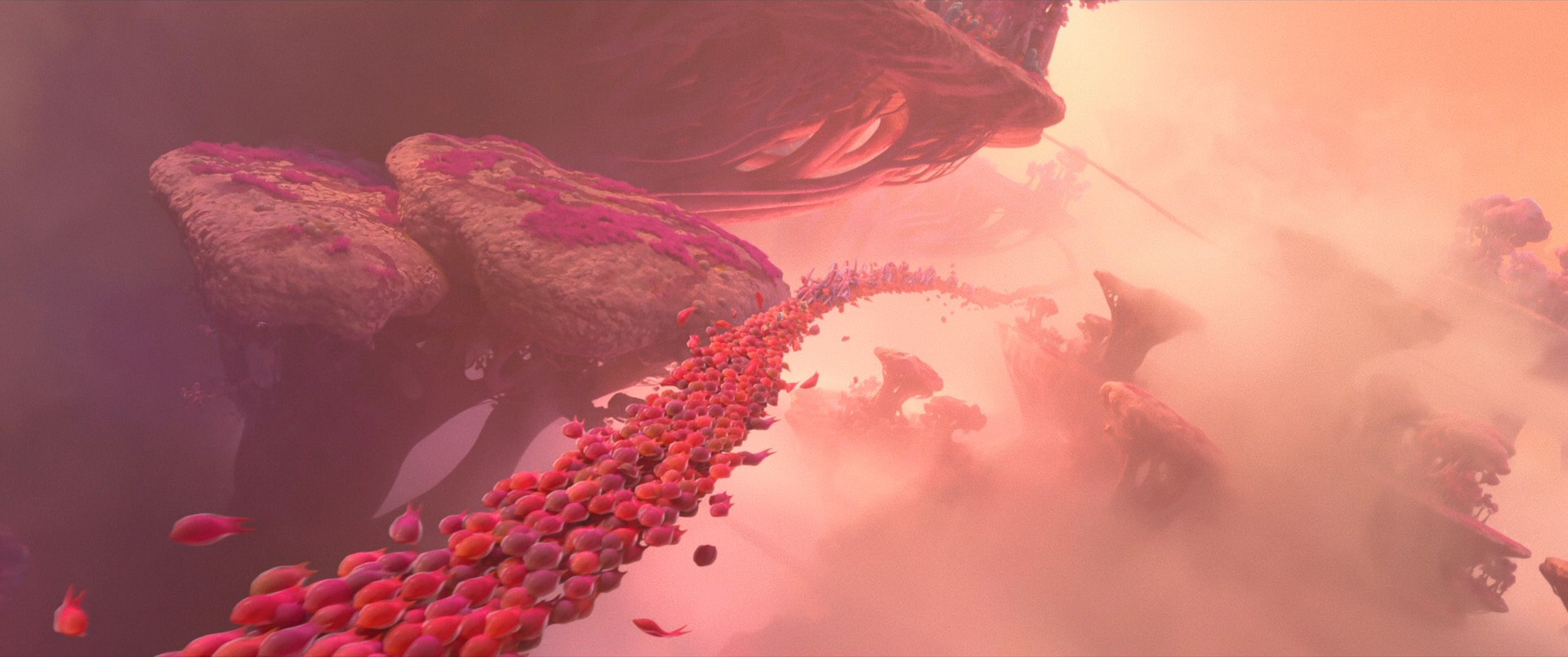

Once the story moves into the subterranean world, the environment of the film ratchets up in production complexity along multiple axes. Essentially every single surface in the subterranean world has significant subsurface scattering since everything is made up of organic gummy materials, and of course all of the giant crowds have subsurface scattering too. Many of our previous films had already begun to push the use of subsurface scattering in environments for things like plants and plastics and other materials, all thanks to the work that the rendering team put into making path traced subsurface scattering efficient and controllable enough for large-scale production usage [Chiang et al. 2016], but Strange World saw the widest usage of subsurface scattering in environments yet, by far.

Everything we’ve learned about controlling subsurface scattering also proved to be extremely important for creating the look for the Splat character, who is essentially a giant immune cell. Splat wound up requiring a one-off shader with custom functionality in Disney’s Hyperion Renderer combining subsurface scattering, a custom faux volumetric emission technique, our multiple-scattering sheen solution [Zeltner et al. 2022], and more in order to achieve the target art direction in a single render pass [Litaker et al. 2023]. Splat’s challenges weren’t limited to just rendering though; rigging and animating Splat also required novel solutions in order to handle how varied and multi-purpose Splat’s limbs are [Black and Pedersen 2023]. Splat’s rig was only made possible through a combination of novel techniques and a decade of experience and continuous improvement in Disney Animation’s DRig modular rig building system [Smith et al. 2012].

Splat wasn’t the only character that provided interesting technical challenges though; in fact, our entire character asset workflow got an upgrade on Strange World. Our standard character asset workflow saw three major improvements on Strange World: eyes, skin, and curves.

Strange World’s character art direction called for eyes to use a bit of a different look from Disney Animation’s usual style; eyes on Strange World have more of an oblong oval shape. Over the past several shows, we introduced a new eye shading model that incorporates manifold next event estimation for physically accurate iris caustics and limbal arcs [Chiang et al. 2018]; one of my smaller projects on Strange World was to help work out the minor modifications to this system that were required to support Strange World’s eye shapes.

For skin shading, Strange World uses the same fully path traced subsurface scattering approach [Chiang et al. 2016] (as opposed to older diffusion-based approaches [Burley 2015]) that we have now used for all of our movies over the past few years. However, Strange World has one of the most diverse casts of any of our recent films in terms of skin tones, and our lighting and look dev artists took special care to make sure all of the different skin types were depicted accurately and beautifully. Doing so required rebuilding our entire skin material from the ground up and radically rethinking our entire approach to lighting characters to better handle contrasting skin tones and high specularity skin [Khoo et al. 2023].

Previously on Encanto, our look artists started to replace triangle mesh-based geometric representations for cloth with curve-based fiber level representations [Velasquez et al. 2022]. This authoring approach was pushed to new limits on Strange World, where curve-based garments were extended to incorporate custom weave patterns and widely varying fiber thicknesses ranging from fine threads to thick yarns [Lipson and Velasquez 2023]. Humans weren’t the only type of characters on Strange World to see upgraded curve geometry though; the Clade family’s lovable dog, Legend, also required upgraded curve grooming techniques to produce one of the most complex animal grooms the studio has ever made [Chun et al. 2023]. Of course all of these improved authoring techniques meant increased curve rendering complexity, but interestingly, we didn’t actually need to improve anything in the renderer to handle the increased curve rendering demand. After having spent many prior shows improving Hyperion’s ability to chew through vast geometric complexity, on Strange World we found that Hyperion was able to just handle all of the meshes and curves that we threw at it!

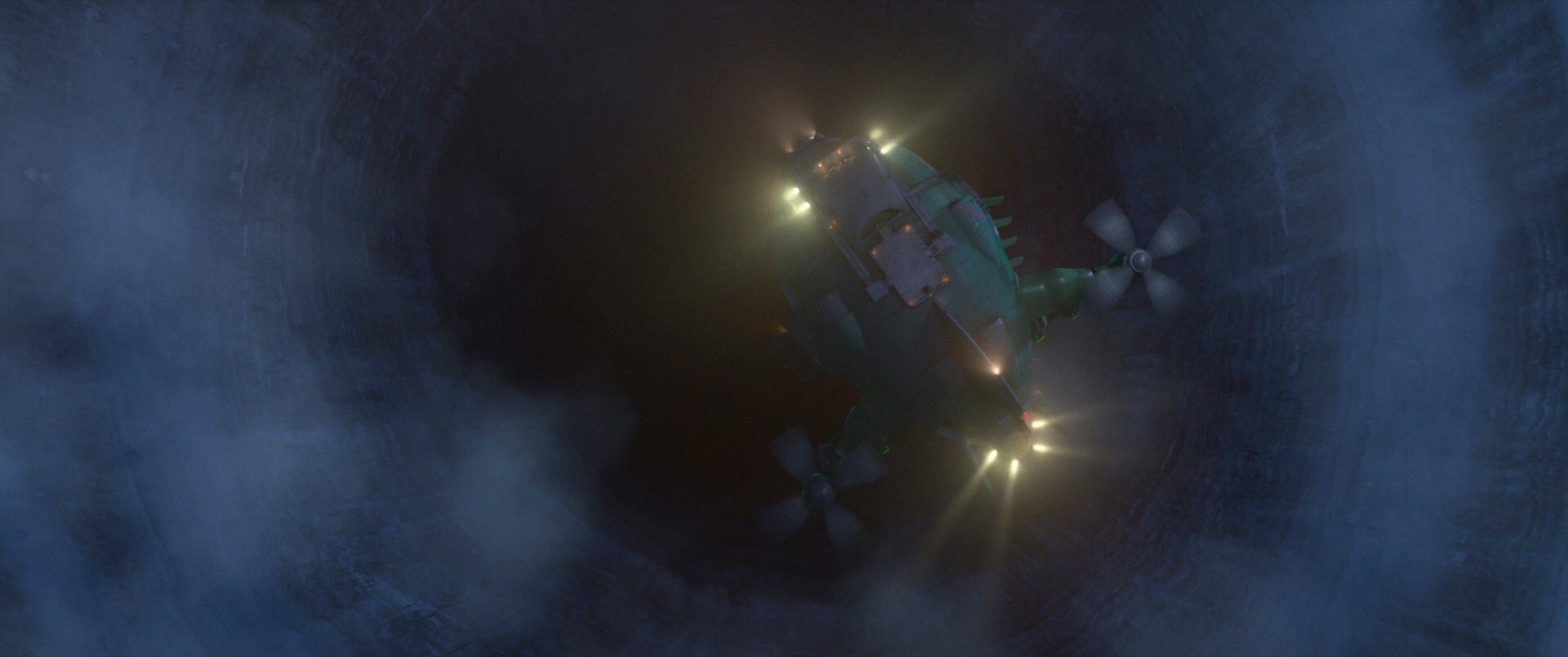

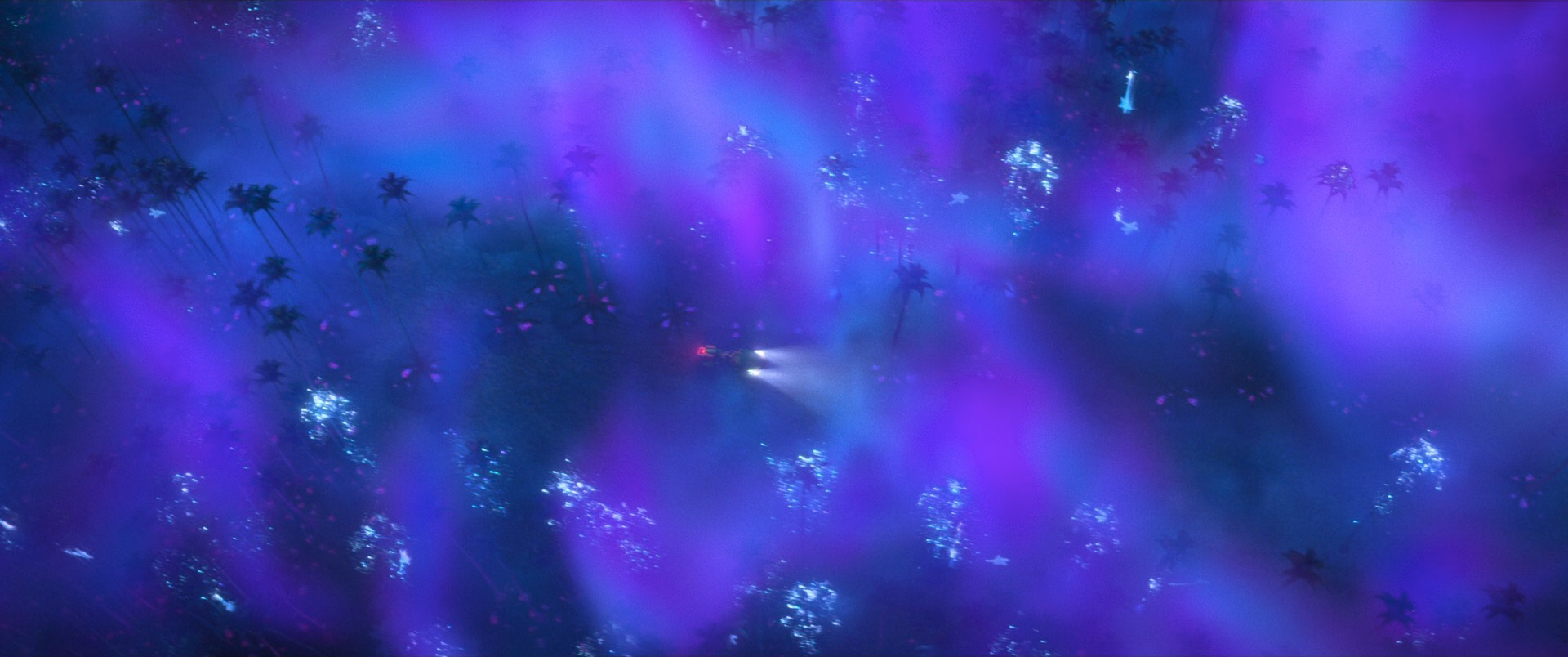

The hardest rendering challenge I worked on for Strange World was volume rendering. Strange World’s environments have some of the largest scale and most ambitious use of volumes in any of our films to date. Strange World extensively utilizes mist and atmospherics and low cloud cover to help convey a sense of mystery and to sell the sheer scale of the environments. Frozen 2 was the first movie that really extensively leveraged Hyperion’s modern volume rendering system (which we rewrote essentially from scratch during the early production of Ralph Breaks the Internet) and the first movie that introduced our modern volumes authoring workflow. This workflow, which is heavily based around quickly set-dressing atmospherics and clouds around environments by kitbashing together volumes from a large pre-made in-house library of VDBs, was further fleshed out on Raya and the Last Dragon and saw its largest and most complex usage yet on Strange World. Strange World also further extended our volume workflows with an evolved version [Navarro 2023] of the neural volume stylization tech we first introduced on Raya and the Last Dragon [Navarro and Rice 2021].

During Raya and the Last Dragon we consolidated various different experiments and techniques in our volume rendering system into a single unified volume integrator [Huang et al. 2021] that can efficiently handle every imaginable type of volume effect, so the challenge presented by Strange World’s volumes wasn’t so much light transport as it was simply a problem of efficiency at scale. When volumes are simultaneously highly detailed but also span kilometers of world space, massive memory usage becomes challenging, even with instancing. Also, very large and detailed volume coverage means that the average path length in volumes can get very long, exposing any potential performance issues in the volume integrator. A huge part of my time on Strange World was spent optimizing our volume integrator. There were no clever shortcuts or brilliant solutions here, just tons of profiling, careful analysis of the existing system architecture, and hard low-level optimization work.

We also noticed during Strange World that artists sometimes had to overauthor volume details in areas as a way to work around the lack of true procedural volume evaluation support in our renderer. While Hyperion does support authoring procedural volumes, these procedural volumes are not actually evaluated at render time but instead are pre-evaluated and baked into a required underlying VDB grid at renderer startup. The reason for this limitation is fundamental to null collision-based volume rendering theory [Novák et al. 2018]; null collision approaches only work if the bounding majorant (AKA max density) for all volumes in a region of space is known upfront. In theory we could just require artists to input a max density value that we would clamp all higher values down to, but such a value isn’t easy for artists to estimate in practice; too low of a value clamps away detail, while too high of a value results in an overly loose bounding majorant, which in null collision theory-based volume rendering can result in significantly slower performance. Inspired by what we were seeing on Strange World, we kicked off a research project in collaboration with the Visual Computing Lab at Dartmouth College to solve this problem, with promising results [Misso et al. 2023]!
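To make the majorant trade-off concrete, here is a minimal sketch of textbook delta (Woodcock) tracking along a single 1D ray. This is not Hyperion's integrator or the progressive null-tracking method; the `density` function, the ray length, and the helper names `delta_track` and `estimate` are all hypothetical and chosen purely for illustration. Density values above the user-supplied majorant are clamped, mirroring the "ask the artist for a max density" idea.

```python
import math
import random

def density(x):
    """Hypothetical procedural density along a ray: a smooth bump peaking at 1.0."""
    return math.exp(-((x - 5.0) ** 2))

def delta_track(density_fn, majorant, t_max, rng):
    """Sample one free-flight distance with delta (Woodcock) tracking.

    Returns (collision distance, or None if the ray escapes; density lookups used).
    """
    t = 0.0
    lookups = 0
    while True:
        # Take a tentative step as if the medium were homogeneous at the majorant.
        t -= math.log(1.0 - rng.random()) / majorant
        if t >= t_max:
            return None, lookups
        lookups += 1
        # Clamp to the majorant: any density above it is silently lost.
        sigma = min(density_fn(t), majorant)
        if rng.random() < sigma / majorant:
            return t, lookups  # real collision
        # Otherwise this was a null collision, so keep marching.

def estimate(majorant, n=20000, seed=1):
    """Return (estimated transmittance, average density lookups per ray)."""
    rng = random.Random(seed)
    escaped = 0
    total_lookups = 0
    for _ in range(n):
        hit, lookups = delta_track(density, majorant, 10.0, rng)
        if hit is None:
            escaped += 1
        total_lookups += lookups
    return escaped / n, total_lookups / n
```

Under these assumptions, `estimate(1.0)` uses the tightest valid majorant, `estimate(10.0)` should converge to roughly the same transmittance while spending many more density lookups per ray on null collisions, and `estimate(0.5)` should report a visibly higher transmittance because the peak of the bump is clamped away.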

As usual, I’ve only written about the parts of making Strange World that I know a bit more about; hundreds of artists, TDs, and engineers worked to craft every frame of this movie and solve many many more problems. For the entire history of Disney Animation, one of the studio’s primary driving purposes has been to push the limits of animation as an art form, and Strange World is no exception to this rule. Strange World is the latest example of how each of our films builds upon what we’ve learned on previous films to push our filmmaking process forward, and as always, getting to be a part of this process is a lot of work but also a lot of fun!

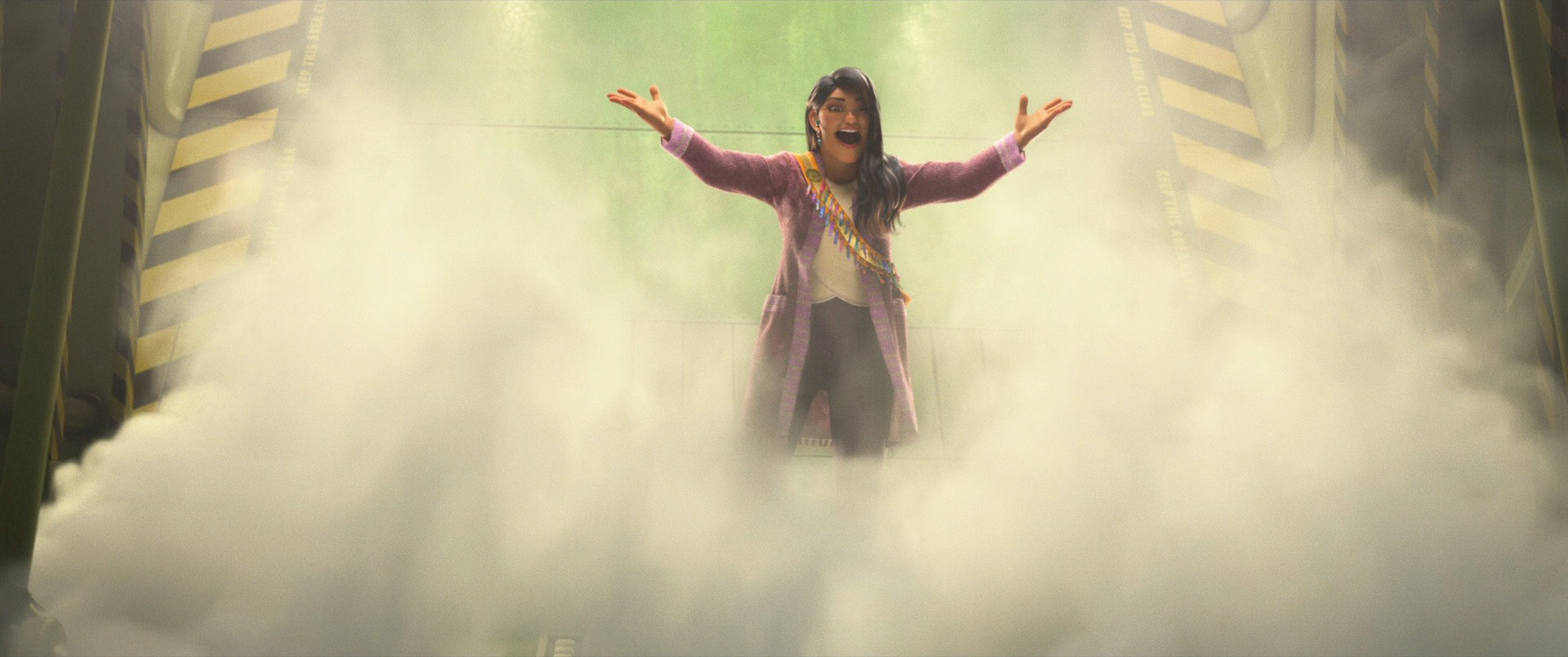

Below are some frames from the strange but gorgeous world of Strange World, pulled from the Blu-ray and presented in semi-randomized order to prevent giving away too much of the story. Go see Strange World on the biggest screen you can find!

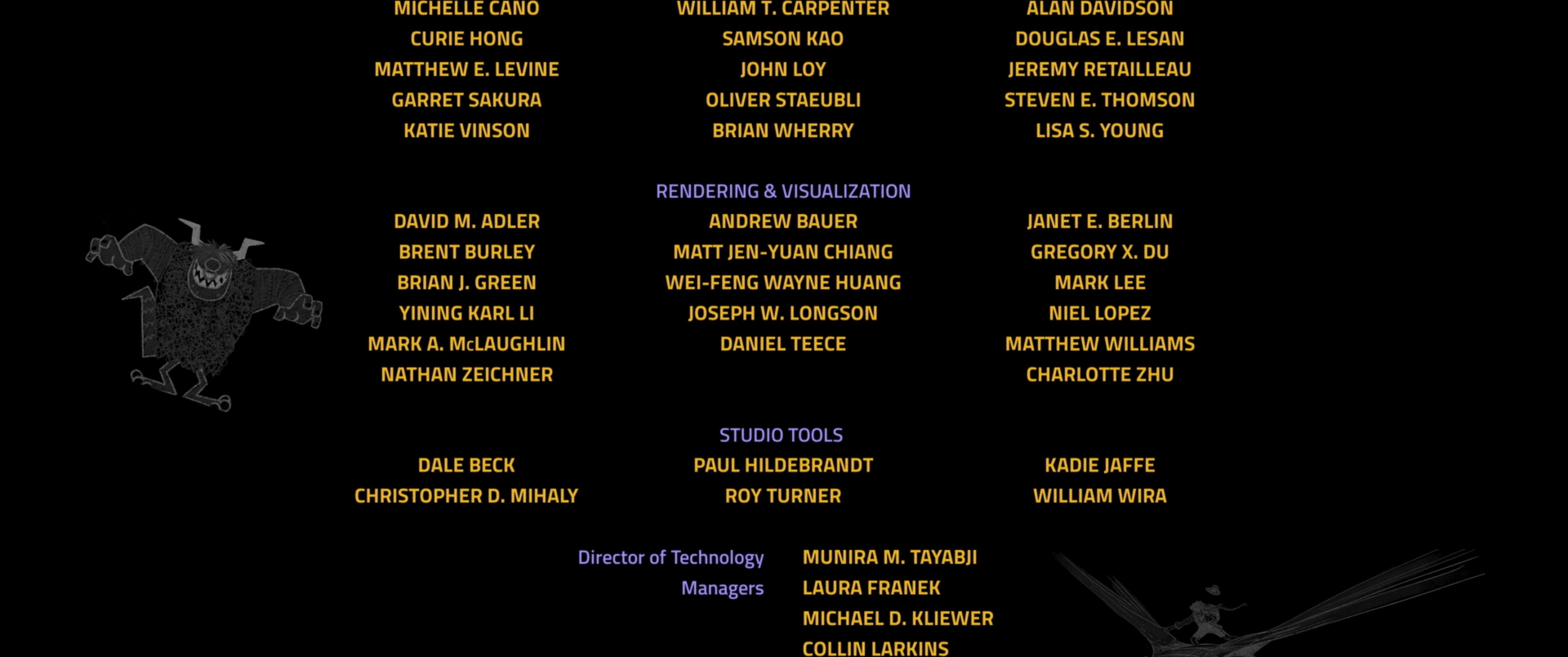

Here is the credits frame for the Hyperion team, which is listed as part of the larger Rendering & Visualization group at Disney Animation. In addition to the Hyperion team, this group also includes our sister render translation pipeline and interactive visualization teams:

All images in this post are courtesy of and the property of Walt Disney Animation Studios.

References

Cameron Black and Christoffer Pedersen. 2023. The Versatile Rigging of Splat in ‘Strange World’. In ACM SIGGRAPH 2023 Talks. Article 29.

Brent Burley. 2015. Extending the Disney BRDF to a BSDF with Integrated Subsurface Scattering. In ACM SIGGRAPH 2015 Course Notes: Physically Based Shading in Theory and Practice.

Matt Jen-Yuan Chiang, Peter Kutz, and Brent Burley. 2016. Practical and Controllable Subsurface Scattering for Production Path Tracing. In ACM SIGGRAPH 2016 Talks. Article 49.

Matt Jen-Yuan Chiang and Brent Burley. 2018. Plausible Iris Caustics and Limbal Arc Rendering. In ACM SIGGRAPH 2018 Talks. Article 15.

Courtney Chun, Jose Velasquez, and Haixiang Liu. 2023. Creating the Art-directed Groom for Legend in Disney’s Strange World. In ACM SIGGRAPH 2023 Talks. Article 7.

Nathan Devlin, Yasser Hamed, Alberto J Luceño Ros, Jeff Sullivan, and D’Lun Wong. 2023. Creating Creature Chaos: The Methods That Brought Crowds to the Forefront on Disney’s ‘Strange World’. In ACM SIGGRAPH 2023 Talks. Article 34.

Wei-Feng Wayne Huang, Peter Kutz, Yining Karl Li, and Matt Jen-Yuan Chiang. 2021. Unbiased Emission and Scattering Importance Sampling for Heterogeneous Volumes. In ACM SIGGRAPH 2021 Talks. Article 3.

Mason Khoo, Dan Lipson, and Jose Velasquez. 2023. Lighting and Look Dev for Skin Tones in Disney’s “Strange World”. In Proc. of Digital Production Symposium (DigiPro 2023). Article 5.

Andy Lin, Hannah Swan, Justin Walker, Cathy Lam, and Ricky Arietta. 2023. Swoop: Animating Characters Along a Path. In ACM SIGGRAPH 2023 Talks. Article 45.

Dan Lipson and Jose Velasquez. 2023. Creating Curve-based Garments With Custom Weave Patterns. In ACM SIGGRAPH 2023 Talks. Article 18.

Kendall Litaker, Brent Burley, Dan Lipson, and Mason Khoo. 2023. Splat: Developing a ‘Strange’ Shader. In ACM SIGGRAPH 2023 Talks. Article 28.

Tad Miller, Harmony M. Li, Neelima Karanam, Nadim Sinno, and Todd Scopio. 2022. Making Encanto with USD: Rebuilding a Production Pipeline Working from Home. In ACM SIGGRAPH 2022 Talks. Article 12.

Zackary Misso, Yining Karl Li, Brent Burley, Daniel Teece, and Wojciech Jarosz. 2023. Progressive Null-tracking for Volumetric Rendering. In Proc. of SIGGRAPH (SIGGRAPH 2023). Article 31.

Mike Navarro and Jacob Rice. 2021. Stylizing Volumes with Neural Networks. In ACM SIGGRAPH 2021 Talks. Article 54.

Mike Navarro. 2023. Diving Deeper Into Volume Style Transfer. In ACM SIGGRAPH 2023 Talks. Article 39.

Jan Novák, Iliyan Georgiev, Johannes Hanika, and Wojciech Jarosz. 2018. Monte Carlo Methods for Volumetric Light Transport Simulation. Computer Graphics Forum (Proc. of Eurographics) 37, 2 (May 2018), 551-576.

Greg Smith, Mark McLaughlin, Andy Lin, Evan Goldberg, and Frank Hanner. 2012. DRig: An Artist-Friendly, Object-Oriented Approach to Rig Building. In ACM SIGGRAPH 2012 Talks. Article 18.

Jose Velasquez, Alexander Alvarado, Ying Liu, and Maryann Simmons. 2022. Embroidery and Cloth Fiber Workflows on Disney’s “Encanto”. In ACM SIGGRAPH 2022 Talks. Article 22.

Emily Vo, George Rieckenberg, and Ernest Petti. 2023. Honing USD: Lessons Learned and Workflow Enhancements at Walt Disney Animation Studios. In ACM SIGGRAPH 2023 Talks. Article 13.

Tizian Zeltner, Brent Burley, and Matt Jen-Yuan Chiang. 2022. Practical Multiple-Scattering Sheen Using Linearly Transformed Cosines. In ACM SIGGRAPH 2022 Talks. Article 7.

Paul Zhang, Zoë Marschner, Justin Solomon, and Rasmus Tamstorf. 2023. Sum-of-squares Collision Detection for Curved Shapes and Paths. In Proc. of SIGGRAPH (SIGGRAPH 2023). Article 76.